If you've ever stored a date in a database, built an API, or debugged a scheduling bug that only appeared in certain countries, you've likely bumped into the relationship between Unix timestamps, UTC, and local time. The confusion is real, and it causes genuine production bugs. This article breaks down exactly what UTC is, why Unix timestamps are UTC by definition, how timezone offsets work, and how to convert timestamps correctly in JavaScript, Python, and PHP. You'll also find the most common mistakes developers make and how to avoid them.

Key Takeaways:

- A Unix timestamp always counts seconds from January 1, 1970, 00:00:00 UTC - it has no timezone attached to it.

- UTC is the universal reference point; local time is just UTC plus or minus an offset.

- Converting a timestamp to local time correctly requires knowing the target timezone, not just an offset number.

- DST (Daylight Saving Time) changes the offset for a timezone, which is why hardcoding offsets causes bugs.

What Is UTC and Why Does It Matter?

UTC stands for Coordinated Universal Time. It is the primary time standard by which the world regulates clocks and time. Unlike time zones, UTC has no offset - it is the zero point. It does not observe Daylight Saving Time, and it does not shift for any country or region.

Before UTC, there was GMT (Greenwich Mean Time), and many people still use the two terms interchangeably. Technically, they differ: GMT is a time zone historically based on mean solar time at Greenwich, while UTC is a time standard based on atomic clocks and kept within 0.9 seconds of solar time by leap seconds. For most software purposes the two are treated as equivalent. UTC was formally defined by the ITU-R TF.460 recommendation.

For developers, UTC matters because it gives you a single, unambiguous reference point. If your server in Frankfurt and your user in Los Angeles both record an event using UTC, you can always compare those two timestamps correctly. If they each record it in local time without metadata, you have a problem.
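As a rough illustration of the Frankfurt and Los Angeles scenario, the sketch below takes two local wall-clock readings and reduces both to UTC-based timestamps. The specific event times are made up for the example, and the use of Python's zoneinfo module (3.9+) is an assumption about the environment.

```python
from datetime import datetime
from zoneinfo import ZoneInfo  # Python 3.9+

# Two hypothetical events, each recorded as local wall-clock time.
frankfurt_event = datetime(2023, 11, 14, 23, 13, 20, tzinfo=ZoneInfo("Europe/Berlin"))
la_event = datetime(2023, 11, 14, 14, 13, 20, tzinfo=ZoneInfo("America/Los_Angeles"))

# Compared as bare wall-clock readings, the events look nine hours apart.
wall_clock_gap = frankfurt_event.replace(tzinfo=None) - la_event.replace(tzinfo=None)
print(wall_clock_gap)  # 9:00:00

# Reduced to Unix timestamps, they turn out to be the same instant.
frankfurt_ts = int(frankfurt_event.timestamp())
la_ts = int(la_event.timestamp())
print(frankfurt_ts, la_ts)    # 1700000000 1700000000
print(frankfurt_ts == la_ts)  # True
```

Without the timezone metadata attached to each reading, there would be no way to tell that the two records describe the same moment.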

Why Unix Timestamps Are Always UTC

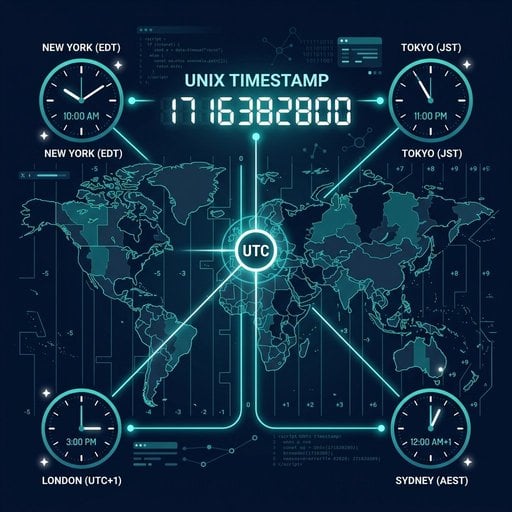

A Unix timestamp (also called epoch time or POSIX time) is defined as the number of seconds that have elapsed since January 1, 1970, 00:00:00 UTC. That anchor point - midnight on January 1, 1970, in UTC - is baked into the definition. There is no version of Unix time that starts in New York local time or Tokyo local time.

This means the answer to "Is a Unix timestamp always UTC?" is yes, by definition. The number 1700000000 represents the exact same moment in time for every person on the planet. What changes between users is how that moment is displayed in their local timezone.

To understand the foundation of this concept in more depth, read our article on Epoch Time: The Foundation of Unix Timestamps.

This UTC-anchored design is one of the most useful properties of Unix time. Because the number itself carries no timezone information, two systems in different countries can exchange a timestamp and both know exactly which moment it refers to, without any extra negotiation.
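A small sketch of that idea, assuming Python 3.9+ with the zoneinfo module: one timestamp is rendered in three zones (picked arbitrarily for the example), and the underlying number never changes.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # Python 3.9+

ts = 1700000000
instant = datetime.fromtimestamp(ts, tz=timezone.utc)

# Render the same instant in several zones; only the display differs.
rendered = {}
for zone in ("UTC", "America/New_York", "Asia/Tokyo"):
    local = instant.astimezone(ZoneInfo(zone))
    rendered[zone] = local.strftime("%Y-%m-%d %H:%M:%S")
    print(f"{zone:18} {rendered[zone]}  (timestamp: {int(local.timestamp())})")

# UTC                2023-11-14 22:13:20  (timestamp: 1700000000)
# America/New_York   2023-11-14 17:13:20  (timestamp: 1700000000)
# Asia/Tokyo         2023-11-15 07:13:20  (timestamp: 1700000000)
```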

UTC vs. Local Time and Timezones

UTC is the reference. Local time is what you get when you apply a timezone rule on top of it. A timezone is not just a fixed offset - it is a named region with a set of rules that can change over time (mostly because of Daylight Saving Time).

For example, "Eastern Time" in the United States is UTC-5 in winter and UTC-4 in summer. The timezone name is "America/New_York" in the IANA timezone database, which is the authoritative source for timezone rules used by most programming languages and operating systems.

The practical takeaway: when you store a Unix timestamp in your database, you are storing a UTC value. When you display it to a user, you convert it to their local timezone. Never store local time as a raw number and assume it is UTC. That is where bugs begin.
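To see that a named timezone carries rules rather than a single offset, here is a minimal sketch that asks the IANA data for the America/New_York offset on two dates. The dates are arbitrary mid-winter and mid-summer picks, and Python 3.9+ is assumed.

```python
from datetime import datetime
from zoneinfo import ZoneInfo  # Python 3.9+

ny = ZoneInfo("America/New_York")

winter = datetime(2023, 1, 15, 12, 0, tzinfo=ny)
summer = datetime(2023, 7, 15, 12, 0, tzinfo=ny)

# The same zone name yields different offsets depending on the date.
winter_hours = winter.utcoffset().total_seconds() / 3600
summer_hours = summer.utcoffset().total_seconds() / 3600

print(winter_hours, winter.tzname())  # -5.0 EST
print(summer_hours, summer.tzname())  # -4.0 EDT
```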

How UTC Offsets Work

A UTC offset describes how far a timezone is from UTC. It is written as +HH:MM or -HH:MM. Some examples:

- UTC+02:00 - Central European Summer Time (CEST), used in Germany and France during summer.

- UTC-05:00 - Eastern Standard Time (EST), used on the US East Coast in winter.

- UTC+05:30 - India Standard Time (IST), which is a half-hour offset.

- UTC+00:00 - UTC itself, also used by the UK in winter (GMT).

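To convert a UTC epoch time to a local time manually, you add or subtract the offset in seconds. For UTC+02:00, that is 2 * 3600 = 7200 seconds. So if your Unix timestamp is 1700000000 (22:13:20 UTC), you format 1700000000 + 7200 = 1700007200 as if it were UTC to get the wall-clock time in UTC+02:00: 00:13:20 on the following day. The shifted number is only an intermediate value for formatting; it is no longer a valid Unix timestamp and should never be stored.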

However, doing this manually is risky because offsets change with DST. Always use a proper timezone library rather than hardcoding an offset. See our guide on How to Convert Unix Timestamp to Date for a deeper look at conversion methods.
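The risk can be sketched in a few lines. The example below assumes a developer hardcoded +02:00 for Germany (the constant name is hypothetical) and compares the result with what the Europe/Berlin rules give for a November timestamp. Python 3.9+ is assumed.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # Python 3.9+

ts = 1700000000  # 2023-11-14 22:13:20 UTC

# Manual approach: shift by a hardcoded +02:00 and format as if it were UTC.
HARDCODED_OFFSET = 2 * 3600  # hypothetical constant, correct only in summer
manual = datetime.fromtimestamp(ts + HARDCODED_OFFSET, tz=timezone.utc)
manual_text = manual.strftime("%Y-%m-%d %H:%M:%S")

# Library approach: let the IANA rules for Europe/Berlin pick the offset.
berlin = datetime.fromtimestamp(ts, tz=timezone.utc).astimezone(ZoneInfo("Europe/Berlin"))
berlin_text = berlin.strftime("%Y-%m-%d %H:%M:%S %Z")

print(manual_text)  # 2023-11-15 00:13:20
print(berlin_text)  # 2023-11-14 23:13:20 CET
```

In November, Germany is on CET (UTC+01:00), so the hardcoded version is an hour off and even lands on the wrong calendar day.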

Converting Unix Timestamps to Local Time

Here is a concrete example using the Unix timestamp 1700000000, which corresponds to November 14, 2023, 22:13:20 UTC. We will convert it to "America/New_York" (UTC-5 in November) in three languages.

JavaScript

    const ts = 1700000000;

    // JavaScript Date takes milliseconds
    const date = new Date(ts * 1000);

    // Display in New York local time
    const options = {
      timeZone: 'America/New_York',
      year: 'numeric',
      month: 'long',
      day: 'numeric',
      hour: '2-digit',
      minute: '2-digit',
      second: '2-digit',
      timeZoneName: 'short'
    };

    console.log(date.toLocaleString('en-US', options));
    // Output: November 14, 2023 at 05:13:20 PM EST

Note that JavaScript's Date object stores time internally as UTC milliseconds. The toLocaleString method with a timeZone option handles the offset and DST rules for you.

Python

    from datetime import datetime, timezone
    import zoneinfo  # Python 3.9+

    ts = 1700000000

    # Create a UTC-aware datetime from the timestamp
    utc_dt = datetime.fromtimestamp(ts, tz=timezone.utc)

    # Convert to New York local time
    ny_tz = zoneinfo.ZoneInfo('America/New_York')
    local_dt = utc_dt.astimezone(ny_tz)

    print(local_dt.strftime('%Y-%m-%d %H:%M:%S %Z'))
    # Output: 2023-11-14 17:13:20 EST

The key here is using datetime.fromtimestamp(ts, tz=timezone.utc). If you omit the tz argument, Python uses your server's local timezone to interpret the timestamp - which is a common source of bugs.

PHP

    $ts = 1700000000;

    // Create a DateTime object from the Unix timestamp (always UTC)
    $dt = new DateTime('@' . $ts);

    // Set the target timezone
    $dt->setTimezone(new DateTimeZone('America/New_York'));

    echo $dt->format('Y-m-d H:i:s T');
    // Output: 2023-11-14 17:13:20 EST

In PHP, the @ prefix when constructing a DateTime object tells PHP to treat the value as a Unix timestamp (UTC). Without it, PHP may interpret the string using the server's default timezone setting.

Common Mistakes Developers Make

Even experienced developers get tripped up by timezone handling. Here are the most frequent issues:

1. Assuming the server's local time is UTC

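If your server is set to "Europe/Berlin" and you call date('Y-m-d H:i:s') in PHP or datetime.now() in Python without a timezone argument, you get local wall-clock time - not UTC. (PHP's time() is safe here: it returns a Unix timestamp, which is UTC-based regardless of the server's timezone.) Always be explicit about UTC when recording timestamps.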
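One way to be explicit in Python is sketched below. It contrasts an aware UTC datetime and a raw Unix timestamp with a naive datetime.now() call; the variable names are illustrative only.

```python
import time
from datetime import datetime, timezone

# An aware datetime pinned to UTC, independent of the server's timezone setting.
now_utc = datetime.now(timezone.utc)

# A Unix timestamp is UTC-based by definition.
now_ts = int(time.time())

# A naive datetime: wall-clock time in the server's zone, with no zone attached.
now_naive = datetime.now()

print(now_utc.isoformat())  # ends in +00:00
print(now_ts)
print(now_naive.tzinfo)     # None
```

The first two values mean the same thing on any server. The third depends on how the machine happens to be configured, and nothing in the value itself records that.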

2. Hardcoding UTC offsets instead of using timezone names

Using +02:00 as a fixed offset for Germany will break in winter when Germany switches to +01:00. Always use named timezones like Europe/Berlin so the library can apply the correct DST rules automatically.

3. Storing timestamps as local time strings without timezone metadata

A string like 2023-11-14 17:13:20 in a database is ambiguous. Is it UTC? EST? IST? If you store timestamps as Unix integers or as ISO 8601 strings with a UTC offset (e.g., 2023-11-14T22:13:20Z), the meaning is unambiguous. See our comparison of Unix Timestamp Format vs ISO 8601 for guidance on which to choose.
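As a sketch of the two unambiguous representations, the snippet below converts a Unix integer to an ISO 8601 string with a Z designator and back again. The replace() call is a compatibility assumption: datetime.fromisoformat() only accepts a trailing "Z" from Python 3.11 onward.

```python
from datetime import datetime, timezone

ts = 1700000000

# Unix integer -> ISO 8601 string with an explicit UTC designator.
iso_text = datetime.fromtimestamp(ts, tz=timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
print(iso_text)  # 2023-11-14T22:13:20Z

# ISO 8601 string -> Unix integer. Swapping "Z" for "+00:00" keeps this
# working on Python versions before 3.11.
parsed = datetime.fromisoformat(iso_text.replace("Z", "+00:00"))
round_trip = int(parsed.timestamp())
print(round_trip)  # 1700000000
```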

4. Ignoring DST during scheduling

If you schedule a job to run at "09:00 every day" by adding 86400 seconds to the last run time, it will fire an hour early or late in local time after each DST change, because the day of the transition is 23 or 25 hours long. Use a cron library or scheduler that understands timezone rules.
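The drift can be reproduced with a small sketch. It assumes a daily 09:00 job in America/New_York and uses the night of November 4 to 5, 2023, when US clocks moved back one hour. This is an illustration of the arithmetic, not a replacement for a real scheduler.

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo  # Python 3.9+

ny = ZoneInfo("America/New_York")

# Last run: 09:00 local on 2023-11-04, the day before US clocks fall back.
last_run = datetime(2023, 11, 4, 9, 0, tzinfo=ny)
last_run_ts = int(last_run.timestamp())

# Fixed-interval approach: add 24 hours' worth of seconds to the timestamp.
drifted = datetime.fromtimestamp(last_run_ts + 86400, tz=timezone.utc).astimezone(ny)

# Wall-clock approach: adding a timedelta to an aware datetime keeps the
# local clock fields, and the zone rules supply the offset for the new date.
next_run = last_run + timedelta(days=1)

print(drifted.strftime("%Y-%m-%d %H:%M %Z"))   # 2023-11-05 08:00 EST
print(next_run.strftime("%Y-%m-%d %H:%M %Z"))  # 2023-11-05 09:00 EST
```

The correct next run is 90000 seconds after the previous one, not 86400, because that particular local day is 25 hours long.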

5. Confusing milliseconds and seconds

JavaScript uses milliseconds for its Date object, but most Unix timestamps are in seconds. Passing a seconds-based timestamp to new Date() without multiplying by 1000 gives you a date in January 1970. This is covered in detail in our article on Seconds vs Milliseconds vs Microseconds.
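The same unit mix-up can be shown from the Python side. The to_seconds helper below is hypothetical, and its magnitude threshold is an assumption that misclassifies millisecond values from before March 1973. Where possible, it is safer to know the unit from the data source than to guess.

```python
from datetime import datetime, timezone

def to_seconds(value: int) -> float:
    """Hypothetical helper: guess the unit from the magnitude of the value."""
    # Assumption: values of 1e11 or more are milliseconds. Read as seconds,
    # 1e11 falls around the year 5138, so real second-based values stay below it.
    return value / 1000 if abs(value) >= 1e11 else float(value)

def to_utc(value: int) -> datetime:
    """Convert a seconds or milliseconds timestamp to an aware UTC datetime."""
    return datetime.fromtimestamp(to_seconds(value), tz=timezone.utc)

print(to_utc(1700000000))     # 2023-11-14 22:13:20+00:00
print(to_utc(1700000000000))  # 2023-11-14 22:13:20+00:00

# The mistake described above: a seconds value read as milliseconds.
wrong = datetime.fromtimestamp(1700000000 / 1000, tz=timezone.utc)
print(wrong)                  # 1970-01-20 16:13:20+00:00
```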

Conclusion

Unix timestamps and UTC are inseparable by design. The timestamp is always a UTC value - a count of seconds from a fixed UTC anchor. Local time is just a display format, not a different kind of timestamp. The safest approach is to store everything as UTC, convert to local time only at the point of display, and always use named timezones rather than hardcoded offsets. Follow these rules and the majority of timezone-related bugs simply stop happening. For best practices on storing these values, see our guide on Unix Timestamps in Databases.

Frequently Asked Questions

Is a Unix timestamp always in UTC?

Yes. By definition, a Unix epoch timestamp counts seconds from January 1, 1970, 00:00:00 UTC. The number itself carries no timezone - it is a UTC value. Timezone only becomes relevant when you convert the timestamp into a human-readable date and time for display purposes.

How do I convert a Unix timestamp to local time?

Use your language's built-in timezone library. In JavaScript, use toLocaleString with a timeZone option. In Python, create the datetime with datetime.fromtimestamp(ts, tz=timezone.utc), then call astimezone() with a named timezone. In PHP, create a DateTime from the timestamp and call setTimezone(). Always use named timezones, not raw offsets.

Why does my converted timestamp show the wrong time?

This usually happens because a function is using the server's default timezone instead of UTC when interpreting the timestamp. In Python, omitting the tz argument to datetime.fromtimestamp() uses local system time. In PHP, not using the @ prefix has the same effect. Always be explicit about UTC at the point of creation.

What is a UTC offset?

A UTC offset is the number of hours (and sometimes minutes) that a timezone is ahead of or behind UTC. For example, UTC-05:00 is five hours behind UTC. When converting a Unix timestamp to local time, the offset is applied to the UTC value. Because offsets change with DST, you should use a named timezone rather than a hardcoded offset.

Can I compare Unix timestamps generated in different timezones?

Yes, and this is one of the main advantages of Unix timestamps. Because every timestamp is a UTC value, you can compare any two timestamps directly as integers. A higher number always means a later point in time, regardless of where the timestamps were generated. No timezone conversion is needed for comparison.